Extraction of essential features from the image

Features are extracted from the image on the basis of color and/or shape. The feature detection algorithm relies primarily on color and intensity.

The approach is described below:

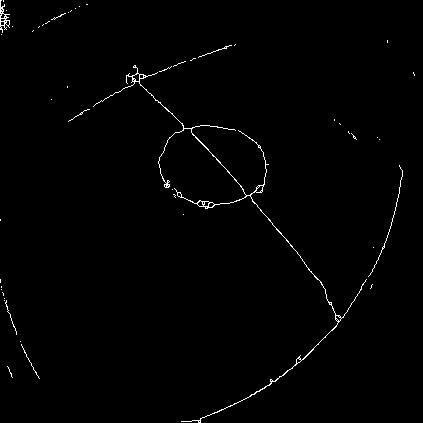

- The image is partitioned into segments using image segmentation. The green-colored foreground is thresholded, and a second image containing only the field is generated.

- The generated field image is then processed with Canny edge detection and thresholding to detect the field lines, the ball, and opponents.

- Once the lines are detected, their sub-features (e.g., a crossing ╬ (X), a common endpoint ╝ (L), or a perpendicular junction ╠ (T)) are detected using a technique known as noding. Nodes are placed at strategic locations along the lines to identify the X's and the T's. These nodes are connected using a nearest-neighbor (NN) approach, optimized to fit the lines via a raster line traversal algorithm.
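The junction types named in the last step can be illustrated with a minimal sketch. It assumes that each node already knows the directions of the line arms leaving it; the function name `classify_junction`, the angle representation, and the tolerance `tol_deg` are hypothetical choices made for illustration and are not taken from the actual noding implementation.

```python
def classify_junction(arm_angles_deg, tol_deg=20.0):
    """Classify a node by the line arms that leave it.

    arm_angles_deg: directions (in degrees) of the line segments meeting
    at the node. This input format is an assumption for the sketch.
    Returns "X", "T", "L", or None if the node is not a junction.
    """
    arms = sorted(a % 360.0 for a in arm_angles_deg)
    if len(arms) == 4:
        return "X"  # two lines crossing each other
    if len(arms) == 3:
        return "T"  # one line starting perpendicular to another
    if len(arms) == 2:
        # Smallest angle between the two arms, in the range [0, 180].
        diff = abs(arms[1] - arms[0])
        diff = min(diff, 360.0 - diff)
        if abs(diff - 90.0) <= tol_deg:
            return "L"  # two lines sharing an endpoint at a corner
    return None  # e.g. two collinear arms: an ordinary point on a line


print(classify_junction([0, 90, 180, 270]))
print(classify_junction([0, 90, 180]))
print(classify_junction([0, 90]))
```

A node with two collinear arms (for example `[0, 180]`) returns `None`, since it lies on a straight line rather than at a junction.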

Blob detection is used to detect the ball, with local extrema used to isolate it from the rest of the image. The detected features are then passed to the localization thread.
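As a rough illustration of picking out local extrema, the sketch below scans a small 2D response map and keeps cells that are at or above a threshold and strictly greater than all of their neighbors. The response map (for instance, a per-pixel "ball likeness" score), the function name `local_maxima`, and the threshold are assumptions made for this example; the actual blob detector may compute its response differently.

```python
def local_maxima(response, threshold):
    """Return (row, col, value) for every cell that is at or above
    `threshold` and strictly greater than each of its 8 neighbours."""
    rows, cols = len(response), len(response[0])
    peaks = []
    for r in range(rows):
        for c in range(cols):
            v = response[r][c]
            if v < threshold:
                continue
            is_peak = True
            for dr in (-1, 0, 1):
                for dc in (-1, 0, 1):
                    if dr == 0 and dc == 0:
                        continue
                    rr, cc = r + dr, c + dc
                    # Neighbours outside the image are ignored.
                    if 0 <= rr < rows and 0 <= cc < cols and response[rr][cc] >= v:
                        is_peak = False
            if is_peak:
                peaks.append((r, c, v))
    return peaks


# Hypothetical response map with a weak and a strong candidate.
response = [
    [0, 0, 0, 0, 0],
    [0, 5, 1, 0, 0],
    [0, 1, 0, 2, 0],
    [0, 0, 2, 9, 0],
    [0, 0, 0, 0, 0],
]
print(local_maxima(response, threshold=6))
```

Raising the threshold discards weak candidates, so only the strongest extremum is reported as the ball candidate.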

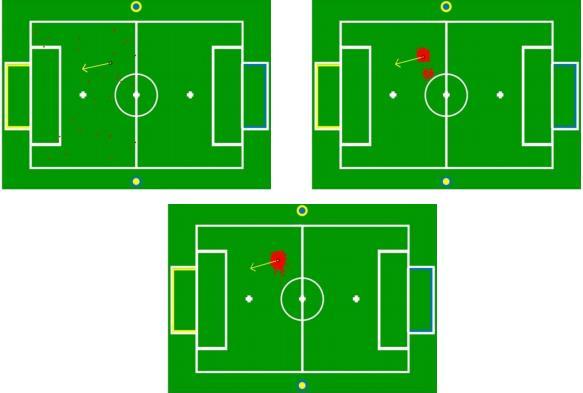

Monte Carlo Localization

This module enables the robot to determine its own position within the soccer field using Monte Carlo localization.

Particles for the localization model are chosen at random within the field area, and the probability of each particle is calculated from its distance to the observed landmarks. The localization module thus identifies the global position of the humanoid, using the landmarks to compute a probability density function over the currently predicted position.
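The particle weighting described above can be sketched as follows. This is a simplified illustration, not the module's actual code: it tracks position only (no heading), assumes a Gaussian error model on landmark distances, and uses hypothetical field dimensions, landmark positions, and a noise parameter `sigma`.

```python
import math
import random


def init_particles(n, field_length, field_width, rng):
    """Draw n particles uniformly at random inside the field."""
    return [(rng.uniform(0, field_length), rng.uniform(0, field_width))
            for _ in range(n)]


def weight_particles(particles, landmarks, observed_dists, sigma):
    """Weight each particle by how well its distances to the known
    landmarks match the observed distances (assumed Gaussian error)."""
    weights = []
    for px, py in particles:
        w = 1.0
        for (lx, ly), d_obs in zip(landmarks, observed_dists):
            d_exp = math.hypot(lx - px, ly - py)
            w *= math.exp(-((d_obs - d_exp) ** 2) / (2 * sigma ** 2))
        weights.append(w)
    total = sum(weights)
    if total == 0:
        # No particle explains the observation; fall back to uniform weights.
        return [1.0 / len(particles)] * len(particles)
    return [w / total for w in weights]


def estimate_position(particles, weights):
    """Weighted mean of the particle positions."""
    x = sum(p[0] * w for p, w in zip(particles, weights))
    y = sum(p[1] * w for p, w in zip(particles, weights))
    return x, y


# Hypothetical 9 m x 6 m field with the four corners as landmarks.
rng = random.Random(0)
landmarks = [(0.0, 0.0), (9.0, 0.0), (0.0, 6.0), (9.0, 6.0)]
true_pos = (3.0, 2.0)
observed = [math.hypot(lx - true_pos[0], ly - true_pos[1]) for lx, ly in landmarks]

particles = init_particles(5000, 9.0, 6.0, rng)
weights = weight_particles(particles, landmarks, observed, sigma=0.3)
print(estimate_position(particles, weights))
```

A full Monte Carlo localization loop would additionally resample particles in proportion to their weights and move them according to the robot's odometry between observations; those steps are omitted here to keep the sketch short.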

Once field-line detection had been achieved with a fish-eye lens, the localization module was updated to incorporate this information into its prediction. Some results of Monte Carlo localization are given.